Melbourne Museum, Tyama: When You Know, You Know

Tyama is the Keerray Woorroong language verb ‘to know’. It is about knowing, not just with our minds, but with our whole body. Sounds a lot like an immersive AV experience.

Text: Christopher Holder

Photos: Eugene Hyland & Daniel Mahon

Melbourne Museum’s Tyama is more than a highly engaging new exhibit; it’s an investment in an immersive future. Put it this way: when you spend hundreds of thousands of dollars on projection alone, you expect more than six months of ‘good feels’ from a temporary exhibition. “We were looking ahead to the next five years with this investment,” confirms Melbourne Museum’s Project Technical Lead, Richard Pilkington.

How many projectors is enough? Richard spec’ed 46. Working with projection engineers Light Engine, he determined that 45 of Panasonic’s solid-state MZ series units would be enough to paint the entire 30m x 30m empty space, a notional ‘every eventuality’ number, with the 46th held as a spare.

PROJECTION IS DEAD, LONG LIVE…

As immersive experiences become a bigger deal, projection’s star continues to rise. An LED-dominated AV future can wait as high-brightness, increasingly compact projectors remain the go-to weapon for theming oversize spaces. “Early on in planning I had people in my ear asking ‘what about LED?’,” recalls Richard Pilkington. “I did some due diligence on that front but I was left in no doubt that projection technology was still the preferred platform on which to build our immersive future at Melbourne Museum.”

In other words, Richard needed some serious convincing to turn his back on proven museum and display technology in favour of a brave new LED world:

“I knew projectors could do what I wanted for a much better price.

“I knew that I was starting with a big, dark box, so any light was going to be under my control and I could control light from projectors far more effectively than LED.

“LED is heavy and needs extra infrastructure.

“LED creates more heat, which is a potential problem when an AV experience includes traditional exhibits, such as the moth cabinets in Tyama.

“I wasn’t as convinced by the ease with which I could stitch multiple different signals together in LED: there were still unknowns.

“Projectors are proven performers and LED would have been a case of biting off more than we could chew at this point.

“The likes of Panasonic have a whole range of product and lenses that give us flexibility to build a bespoke project. At this point, LED forces you to design around it rather than the other way around.”

WHAT’S TYAMA?

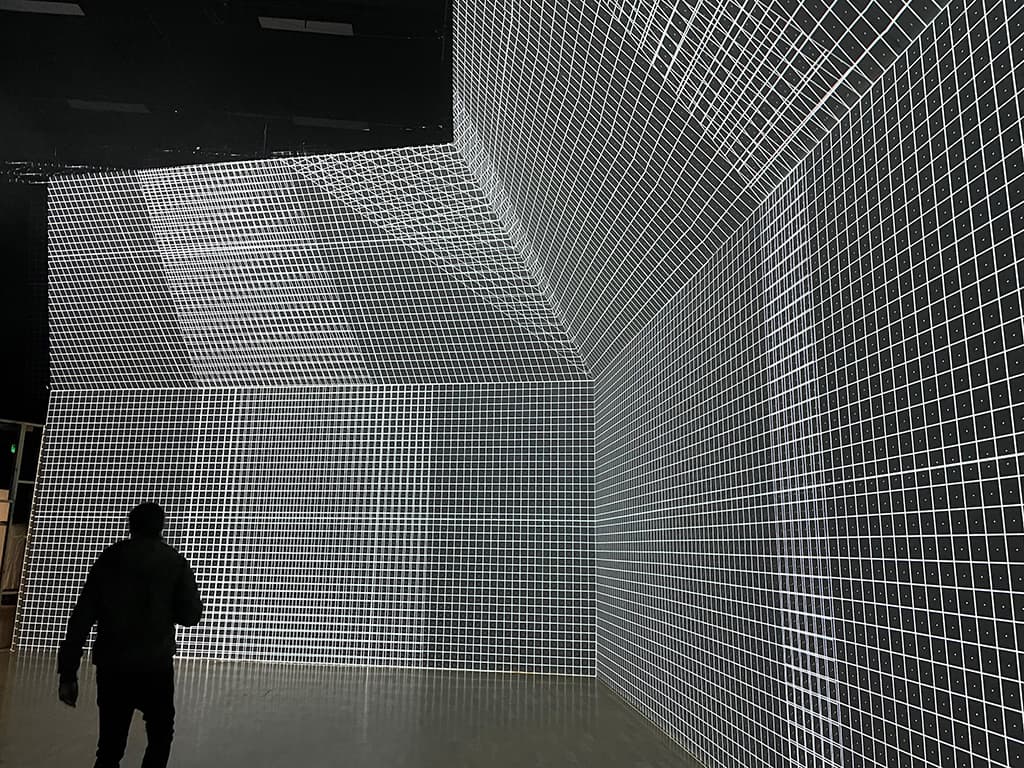

Tyama (CHAH-MUH) is the Keerray Woorroong language verb ‘to know’. It is about knowing, not just with our minds, but with our whole body. By listening closely with all our senses, we can embody these learnings and understand our place in the world. Grounded in First Peoples knowledge, Tyama blends the physical and digital to create an unmissable immersive experience to reawaken your connection to the natural world. Tyama transports you to Victoria’s nocturnal worlds, immersing you in 360° responsive projections, breathtaking effects, and exquisite soundscapes.

Moth Night Sky

Become a moth by interacting with, then attaching yourself to, one of the projected creatures. Lead your moth to the right blossom for dinner. Then stand back, slightly aghast, as your moth finds a mate and engages in some procreation.

Two Intel RealSense sensors are stitched together to cover the floor area. Eight Panasonic MZ series projectors are fed by two Unreal Engine servers running the eight outputs. The blob track created by the sensors (fed to an Intel NUC 9 ‘sensor PC’ in the ceiling) acts like a joystick or a mouse to control the movement of the corresponding moth in the video content. The video comes from the server rack, where Nvidia GPUs output it via DisplayPort into HDBaseT converters.
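To illustrate the ‘joystick’ idea, here is a minimal Python sketch under stated assumptions: the sensor PC reports each blob’s centroid in metres on the floor, the floor dimensions are known, and the moth eases towards the visitor a little each frame. The Blob type, the function names and the smoothing factor are all hypothetical; nothing here reflects the museum’s actual Unreal Engine code.

```python
from dataclasses import dataclass


@dataclass
class Blob:
    # Hypothetical blob report: centroid on the floor, in metres
    x_m: float  # distance from the left edge of the tracked area
    y_m: float  # distance from the top edge of the tracked area


def to_content_coords(blob: Blob, floor_w_m: float, floor_h_m: float) -> tuple:
    """Normalise a floor position to 0..1 content coordinates, clamped to the edges."""
    u = min(max(blob.x_m / floor_w_m, 0.0), 1.0)
    v = min(max(blob.y_m / floor_h_m, 0.0), 1.0)
    return u, v


def steer(moth_uv: tuple, target_uv: tuple, smoothing: float = 0.2) -> tuple:
    """Move the moth a fraction of the way towards the visitor (exponential smoothing),
    so jittery sensor data becomes a smooth, joystick-like glide."""
    mu, mv = moth_uv
    tu, tv = target_uv
    return mu + smoothing * (tu - mu), mv + smoothing * (tv - mv)
```

Called once per frame, `steer(moth, to_content_coords(blob, 10.0, 8.0))` nudges the moth towards a visitor standing anywhere on a (hypothetical) 10m x 8m floor; the smoothing factor trades responsiveness against jitter.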

LIFE THROUGH A LENS

Panasonic’s range of lenses, including the ultra short throw (UST) lenses, was a significant selling point. Lumicom took care of the projector installation, with Damien Vanslambrouck leading the team. Damien first encountered Panasonic’s UST lenses when one got him out of a jam during the ACMI Museum of the Moving Image install: “There was a straight wall and then it bent into a 90-degree curve. The client didn’t have budget for two projectors and the ET-DLE020 UST lens allowed us to do it with one projector. It was like AV sorcery!”

Melbourne Museum bought two of the equivalent Panasonic ET-EMU100 (0.33:1 – 0.353:1, zero offset) lenses for Tyama. “We knew ceiling space was going to be tight at times. And when you need a large image, the EMU100 has been great. With lower ceiling heights the fact the lens is zero offset means you don’t have to mount the projector higher than the top of the screen. We didn’t necessarily have a predetermined application for the two EMU100 UST lenses, we just knew they were going to save our bacon during the install. What with all the other services in the ceiling space it was impossible to reliably predict each of the mounting points. The AV guys are always the last in the queue and the EMU100 afforded us huge flexibility when things looked impossible.”
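The throw ratio quoted for the ET-EMU100 is throw distance divided by image width, so a quick sum shows why a UST lens helps in a crowded ceiling. The sketch below uses only the 0.33:1 to 0.353:1 range stated above; the 6m image width in the usage note is an arbitrary example, and real mounting positions should come from Panasonic’s own throw charts, which define exactly where the distance is measured from.

```python
# Throw ratio = throw distance / image width (range as quoted for the ET-EMU100)
RATIO_MIN = 0.33
RATIO_MAX = 0.353


def throw_distance_range(image_width_m: float,
                         ratio_min: float = RATIO_MIN,
                         ratio_max: float = RATIO_MAX) -> tuple:
    """Shortest and longest throw distance that yields the requested image width."""
    return image_width_m * ratio_min, image_width_m * ratio_max


def image_width_range(throw_distance_m: float,
                      ratio_min: float = RATIO_MIN,
                      ratio_max: float = RATIO_MAX) -> tuple:
    """Smallest and largest image width achievable from a fixed mounting distance."""
    return throw_distance_m / ratio_max, throw_distance_m / ratio_min
```

For a 6m-wide image, `throw_distance_range(6.0)` gives roughly 1.98m to 2.12m, which is why a single UST-fitted projector can sit so close to the surface it paints.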

EVENTS MENTALITY

The investment in technology that you see in Tyama represents a subtle but profound switch in philosophy for the museum. The 30m x 30m blank canvas that Tyama occupies will play host to future immersive exhibitions. For Richard, it’s taking on more of an AV events mindset towards museum exhibits, namely keeping a stock of technology that can be repurposed and will be equally applicable year on year. The arsenal of Panasonic projection is the most conspicuous aspect of the grand plan but the lighting, the truss, the audio, media servers, the RealSense sensors and sensor UI, and the HDBaseT baluns all play a big role in the future of immersive at Melbourne Museum.

“We have an amorphous box that we’ll always start with, and I wanted to invest in elements from there,” explains Richard Pilkington. “The events approach resonated with me. That said, Tyama is operating seven days a week from 9 to 5. So the equipment is worked constantly for a six-month period and this necessitates some control and monitoring overlays you mostly don’t need in an events situation.”

That control and monitoring comes from Nodel.

Bat Cave

Visitors become bats, triggering echolocation ‘pings’ to navigate the tunnel. Melbourne Museum commissioned AX Interactive to build a UI that allows AV staff to visualise the use of multiple sensors. In this case, the Bat Cave uses audio sensors (microphones). RME mic preamps feed the NUC sensor PC, which prioritises the loudest clap coming from the four mics. The Unreal Engine subscribes to the sensor data: if the loudest clap originated from ‘Quadrant 4A’, say, Unreal sends content down the HDBaseT line to the relevant projectors to display the echolocation graphics, and sends a message to QLab to play the interaction sound through the multichannel audio setup. Bat Cave employs four Panasonic MZ series projectors and 16 loudspeakers.
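The ‘loudest clap wins’ logic can be sketched in a few lines of Python. This assumes each of the four mic channels arrives as a short block of samples normalised to -1..1, uses RMS level as the loudness measure, and maps channel indices to quadrant labels; apart from ‘Quadrant 4A’, those labels and the trigger threshold are hypothetical.

```python
import math

# Hypothetical mapping of mic channel index to floor quadrant
QUADRANTS = {0: "Quadrant 1A", 1: "Quadrant 2A", 2: "Quadrant 3A", 3: "Quadrant 4A"}


def rms(samples: list) -> float:
    """Root-mean-square level of one block of samples (0.0 for an empty block)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def loudest_quadrant(channels: list, threshold: float = 0.1):
    """Return the quadrant whose mic heard the loudest sound,
    or None if no channel cleared the trigger threshold."""
    levels = [rms(ch) for ch in channels]
    best = max(range(len(levels)), key=levels.__getitem__)
    if levels[best] < threshold:
        return None
    return QUADRANTS[best]
```

The returned label is the kind of compact event a game engine can subscribe to; the threshold keeps background chatter from firing pings.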

USING THE OL’ NODEL

Melbourne Museum commissioned Lumicom to author Nodel for them around 10 years ago. It’s an open source IP control system widely adopted by (mostly) creative institutions around the world. “It’s primarily designed for museums but we use it across all our projects now, including corporate jobs,” confirms Lumicom’s Damien Vanslambrouck. Nodel might be open source but Lumicom, as the author, remains the primary caretaker of the system and works with Melbourne Museum on ‘recipes’ — Nodel-speak for bespoke macros, UIs, control panels and the like. These recipes are then shared to the Nodel community.

LINGO: MORE PROTOCOLS

Nodel might act as the museum’s ultimate control ‘ringmaster’, but it doesn’t stop Richard and the team from leveraging other pieces of control software that best suit the application.

“I use OSC [Open Sound Control] a lot on this project. It’s a Nodel recipe that fires OSC commands backwards and forwards out of the Unreal Engine [a games engine that masterminds the multimedia environment] to trigger the audio cues in QLab. The Unreal Engine does not do spatial audio very well, so this approach has definitely proved superior.”
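For readers unfamiliar with OSC, a message is just an address pattern, a type-tag string and packed big-endian arguments, each padded to a multiple of four bytes, usually sent over UDP. The Python sketch below encodes an OSC 1.0 message using only the standard library. The cue number, the host address and the choice of QLab’s `/cue/{number}/start` command on UDP port 53000 are assumptions for illustration, not details of the museum’s Nodel recipe.

```python
import socket
import struct


def _osc_pad(b: bytes) -> bytes:
    """OSC strings are NUL-terminated, then NUL-padded to a multiple of 4 bytes."""
    b += b"\x00"
    return b + b"\x00" * (-len(b) % 4)


def osc_message(address: str, *args) -> bytes:
    """Encode an OSC 1.0 message with int32 ('i'), float32 ('f') and string ('s') arguments."""
    tags = ","
    payload = b""
    for a in args:
        if isinstance(a, bool):
            raise TypeError("booleans are not handled in this sketch")
        if isinstance(a, int):
            tags += "i"
            payload += struct.pack(">i", a)
        elif isinstance(a, float):
            tags += "f"
            payload += struct.pack(">f", a)
        elif isinstance(a, str):
            tags += "s"
            payload += _osc_pad(a.encode("utf-8"))
        else:
            raise TypeError(f"unsupported OSC argument: {a!r}")
    return _osc_pad(address.encode("ascii")) + _osc_pad(tags.encode("ascii")) + payload


def send_osc(host: str, port: int, address: str, *args) -> None:
    """Fire a single OSC message over UDP (no acknowledgement is expected)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, *args), (host, port))
```

A call such as `send_osc("192.0.2.10", 53000, "/cue/12/start")` (documentation IP, hypothetical cue 12) is the sort of one-line trigger an engine-side script could fire when an interaction completes.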

Audio out of QLab uses Dante audio-over-IP. From there it’s either Dante direct to the PoE Genelec active loudspeakers or to eight-channel Yamaha XMV Dante amplifiers, then onto Yamaha installation loudspeakers via speaker cable.

“The Genelec speakers run off PoE, which makes them more flexible in tight spots, like the underfloor of the Shallows. Horses for courses, really,” comments Richard Pilkington.

HDBaseT rules uncontested in an application such as Tyama — 45 runs to 45 projectors. Richard elaborates: “I filled the hole in the wall that goes from the comms room into the exhibition space with 45 runs of Cat6 shielded cable. There wasn’t enough room to fit another 45 individual network runs for control, so we put the control over the HDBaseT runs, using Panasonic’s Digital Link. It allows me to monitor and power up/down the units as well as send signal. Digital Link has definitely reduced the complexity of the system in a really important way. We’re not dealing with two products or two different IPs… it’s streamlined.”


Shallows

Join a shoal of anchovies, then heartlessly lure them to the shallows, where they are savagely devoured by an array of predators, such as a stonefish and hungry gannets. Some 14 Panasonic MZ series projectors paint every surface of a geometrically complex space, including five for the inner cylinder alone. Four Intel RealSense sensors feed one Intel NUC 9 Extreme to provide the ‘blob track’ for interactive data. The audio cues go to the QLab server, which triggers the sound effects and plays the backing track. In all, 22 loudspeakers, seven of them Genelec PoE models and the rest Yamaha passive units, take care of the audio immersion, including upward-firing speakers in the floor to bring the audio image down to where the action is.

MAKING SENSE OF REALSENSE

Richard Pilkington and his team selected Intel RealSense depth cameras for Tyama. Wikipedia describes RealSense thus: Intel RealSense Technology is a product range of depth and tracking technologies designed to give machines and devices depth perception capabilities. The technologies, owned by Intel, are used in autonomous drones, robots, AR/VR, smart home devices and many other broad-market products. Richard makes this observation: “A Kinect will aim to map a person’s skeleton, while RealSense is designed to be more like machine vision. We’re mostly pointing the cameras from the ceiling to the floor and we’re after an AI ‘blob’ created by a person’s presence.”

Melbourne Museum used AX Interactive to design a sensor ‘mapping’ software tool to visualise multiple sensors in much the same way as you would with projection mapping tools.

Richard Pilkington: “With the AX Interactive UI you can look down from the camera view and overlay the two cameras on top of each other to get the best blob-tracking picture. From there you can adjust the tracking height and the depth and how big the blob is. Worthy of note: the Panasonic projectors don’t emit a frequency that interferes with the IR cameras. We’ve got both of them pointed at the ground in the same way and they work in tandem, without stepping on each other’s toes.”
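Adjusting “the tracking height and the depth and how big the blob is” maps neatly onto a classic blob-detection pipeline: convert each ceiling-mounted depth reading into a height above the floor, keep pixels inside a height band, group neighbouring pixels into connected regions, and discard regions too small to be a person. The pure-Python sketch below shows that pipeline on a single depth frame. The parameter names and default values are hypothetical, and this is not AX Interactive’s software, which also handles overlaying the two cameras.

```python
from collections import deque


def find_blobs(depth_m, camera_height_m, min_height_m=0.3, max_height_m=2.2, min_pixels=4):
    """Return (row, col) centroids of blobs whose height above the floor sits in the band.

    depth_m is a 2D list of distances from a ceiling camera looking straight down;
    0.0 is treated as 'no reading'.
    """
    rows, cols = len(depth_m), len(depth_m[0])
    # Height above floor = camera height - depth; keep only pixels inside the tracking band
    mask = [[0.0 < d and min_height_m <= camera_height_m - d <= max_height_m for d in row]
            for row in depth_m]
    seen = [[False] * cols for _ in range(rows)]
    centroids = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Flood-fill one connected region (4-connectivity)
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # 'How big the blob is': ignore specks too small to be a visitor
            if len(pixels) >= min_pixels:
                centroids.append((sum(p[0] for p in pixels) / len(pixels),
                                  sum(p[1] for p in pixels) / len(pixels)))
    return centroids
```

Raising `min_height_m` filters out bags on the floor, while `min_pixels` plays the role of the blob-size control; the centroids are what a content engine would then treat as cursor positions.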

Richard suspects that stitching two RealSense cameras together like this is a first. The AX Interactive software allows him and his team to fine-tune the sensor mapping as they go, without hiring in specialised assistance.

The sensor data stream is intense, but the Intel NUCs have proven themselves worthy. Richard Pilkington explains: “The Intel NUC 9 Extremes have proven very capable at handling the huge torrents of sensor data. Initially I thought I’d run one NUC per sensor. It turns out each NUC can handle multiple USB 3.1 streams of sensor data. We have four sensors running into one NUC in the Shallows, for example. It does mean we need to use pricey, specialised 10m USB-C cables for those additional IR cameras.”

Whale Room

Huge 8m-high walls provide a colossal canvas for the gentle giants of the sea. Fifteen Panasonic MZ series projectors are employed, fourteen of them in portrait orientation, and the combined horizontal pixel count is immense. Richard Pilkington: “The light bouncing off a projection screen in an otherwise dark room is very different to a light emitting diode. It’s easier on the eye and, as a result, it’s easier to get immersed in that environment. ‘Just turn down the LED output,’ you might say. But I know those panels aren’t designed with that in mind.”

Panasonic’s Incontrol software is used to do the image fade for the projection blend, while the Vioso media server takes care of the actual image blend. Richard Pilkington: “By using Panasonic Incontrol blending I can fix the drift that inevitably happens over time, without going back through that process of a blend in Vioso. In my view, it just reduces the number of things that can possibly go wrong.”
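Edge blending works by ramping each projector’s brightness down across the overlap so that the two contributions add up to uniform light. The sketch below uses a widely published power-curve ramp with gamma compensation. It is a generic illustration, not the algorithm used by Panasonic’s software or the Vioso media server, and the exponent of 2 and display gamma of 2.2 are assumed values.

```python
def blend_linear(t: float, p: float = 2.0) -> float:
    """Linear-light weight for a pixel at position t across the overlap.

    t = 0 is where this projector has faded out completely, t = 1 is full brightness.
    The neighbouring projector uses blend_linear(1 - t), so the pair always sums to 1.
    """
    t = min(max(t, 0.0), 1.0)
    if t < 0.5:
        return 0.5 * (2.0 * t) ** p
    return 1.0 - 0.5 * (2.0 * (1.0 - t)) ** p


def blend_signal(t: float, gamma: float = 2.2, p: float = 2.0) -> float:
    """Convert the linear-light weight into a drive level by undoing the display gamma."""
    return blend_linear(t, p) ** (1.0 / gamma)
```

Working in linear light and only then applying the inverse gamma is what prevents a visible bright or dark band in the overlap; when projectors drift, the ramp stays the same and only its position needs correcting.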

MEASURE OF SUCCESS

A big immersive experience like Tyama, by its very nature, has a different impact on different people. Some are happy to stand to one side and take it all in; others (mostly kids, if we’re honest!) immediately understand the mechanics of the interactions and delight in it; and there are myriad responses in between. Having spaces that allow up to 20 people to simultaneously interact with the content is pretty special and demonstrates how AV has the power to bring people together through wonder and shared experiences.

CONTACTS

Melbourne Museum: museumsvictoria.com.au/melbournemuseum

Brendan Woithe, Klang (immersive atmospheres, music & sound effects): klang.com.au

Aaron Marshall, Light Engine (projection engineers): lightengine.video

Lumicom (projector installation): lumicom.com.au

AX Interactive (sensor UI): axinteractive.com.au

KEY SUPPLIERS

Panasonic (projection): panasonic.com.au

Yamaha (amps, loudspeakers): au.yamaha.com

Studio Connections (Genelec): studioconnections.com.au

Unreal Engine: unrealengine.com

ITI Image Group (Vioso): iti-imagegroup.com
